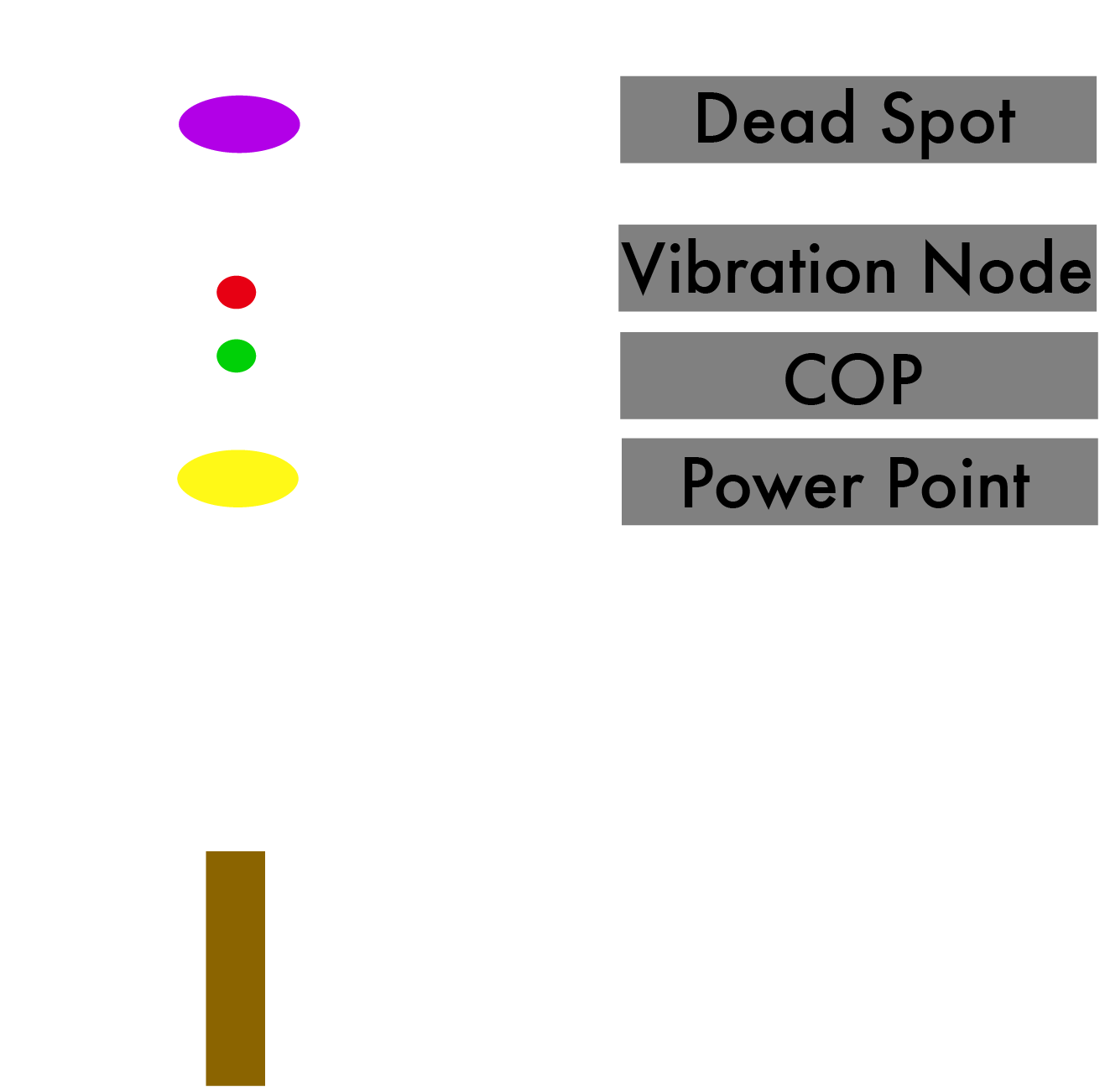

I want to explore the physical manifestation of sound. Specifically, I'm interested in cymatics, the practice of making sound vibrations visible. There are many ways to perform a cymatics experiment, with different materials and set-ups. I'm interested in a set-up where a metal plate is attached to a speaker driver. When sound frequencies are played through the speaker, the plate vibrates, and whatever material sits on it (a solid such as sand, or a liquid such as water) forms patterns that make those vibrations, and therefore the sound frequencies, visible.

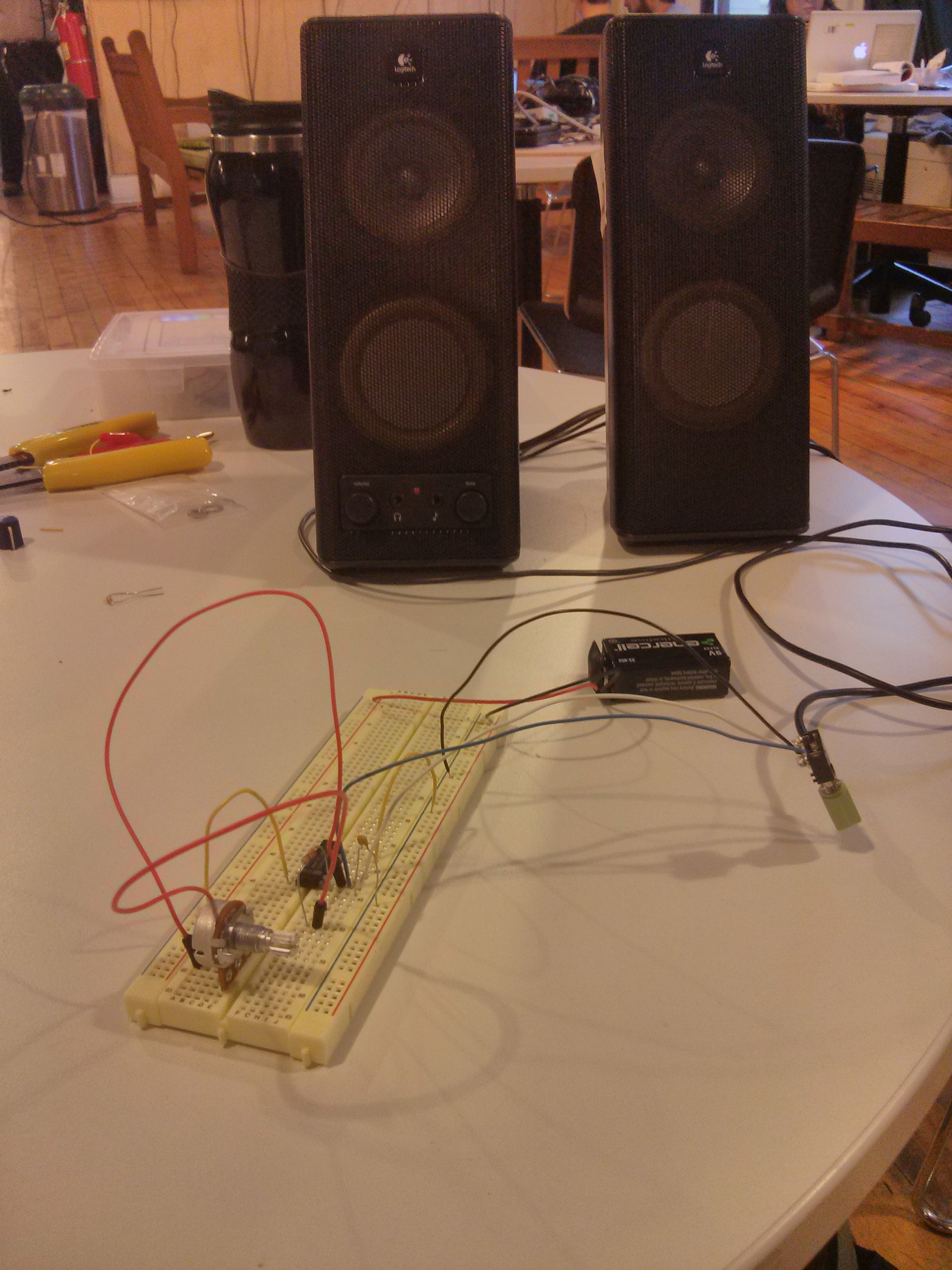

I started my own experiment using an old speaker I found on ITP's junk shelf and a plastic container with some water.

This is just a proof of concept. Next, I would like to amplify the sound to get stronger vibrations, switch to a metal plate because metal resonates better than plastic, and use a solid material like sand so that the patterns persist instead of disappearing the moment the sound stops.

All of this concerns the visual output of my project. For the input (what triggers the sounds and vibrations), I want to use my tennis racquet recordings in order to visualize the frequencies of tennis racquets. The concept would include two metal plates, each reacting to one of the two racquets in a match, so that the match itself is visualized through sound.

My concern is that the sound of a tennis racquet hitting a ball lasts only about 1 second, producing very short-lived vibrations. The patterns in water or sand form through sustained frequencies, so 1-second clips will not be enough to generate interesting visuals. I'm considering stretching the recordings to draw the sounds out, but I'm afraid that would sacrifice the sonic character of the racquet hitting the ball.
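One thing worth knowing before stretching: if a clip is lengthened simply by playing it back slower, every frequency in it is divided by the stretch factor, so a 1-second hit stretched to 10 seconds drops by a factor of 10 in pitch. A hedged alternative (a swapped-in technique, not something tried in this project yet) is to find the strongest frequency in a short window of the recording and then drive the plate with a sustained sine at that frequency. That keeps the pitch but gives up the timbre. The sketch below assumes the recording is already loaded as a list of float samples; `dominant_frequency` and `sustained_tone` are hypothetical helper names, and the naive DFT is used only to stay dependency-free.

```python
import math

def dominant_frequency(samples, sample_rate):
    """Return the frequency in Hz of the strongest DFT bin, skipping DC.

    A naive O(n^2) DFT keeps this sketch dependency-free; it is only practical
    for short windows (a few thousand samples). Frequency resolution is
    sample_rate / len(samples).
    """
    n = len(samples)
    best_bin, best_power = 1, -1.0
    for k in range(1, n // 2 + 1):
        real = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        imag = -sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        power = real * real + imag * imag
        if power > best_power:
            best_bin, best_power = k, power
    return best_bin * sample_rate / n

def sustained_tone(freq_hz, seconds, sample_rate, amplitude=0.8):
    """Render a constant-amplitude sine at freq_hz for the given duration."""
    n = int(seconds * sample_rate)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

# Synthetic stand-in for a racquet hit (hypothetical): a decaying 100 Hz ping.
rate = 1000
ping = [math.exp(-5 * i / rate) * math.sin(2 * math.pi * 100 * i / rate) for i in range(500)]
freq = dominant_frequency(ping, rate)
tone = sustained_tone(freq, 10, rate)  # 10 seconds to feed the plate
```

For real 44.1 kHz recordings, `numpy.fft.rfft` would replace the naive loop and make analyzing the whole clip practical.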

Another approach would be to use hand gestures to generate different sound frequencies, which would then be visualized through cymatics. I think this could be a playful way to learn about the physics of sound while generating interesting visual patterns. I could implement this with the Leap Motion and Processing, mapping hand gestures to different sine wave frequencies and playing those sounds through the speakers to visualize them.
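The gesture-to-frequency mapping could look roughly like the sketch below, written in Python rather than Processing to keep it self-contained. It assumes the gesture is reduced to a single number, palm height above the sensor in millimetres; the height and frequency ranges are hypothetical and would need tuning on the actual plate. The mapping is logarithmic so that equal hand movements give equal musical intervals (Processing's built-in `map()` would give the simpler linear version). The sine renderer carries its phase across blocks so the tone doesn't click when the frequency changes.

```python
import math

# Hypothetical ranges: palm height in millimetres and the band of drive
# frequencies to explore on the plate. Both would be tuned by experiment.
MIN_HEIGHT_MM, MAX_HEIGHT_MM = 100.0, 500.0
MIN_FREQ_HZ, MAX_FREQ_HZ = 60.0, 1200.0

def height_to_frequency(height_mm):
    """Map palm height to a frequency on a logarithmic (musical) scale."""
    t = (height_mm - MIN_HEIGHT_MM) / (MAX_HEIGHT_MM - MIN_HEIGHT_MM)
    t = min(max(t, 0.0), 1.0)  # clamp so out-of-range hands stay in band
    return MIN_FREQ_HZ * (MAX_FREQ_HZ / MIN_FREQ_HZ) ** t

def sine_block(freq_hz, phase, n_samples, sample_rate=44100):
    """Render n_samples of a sine at freq_hz, starting from phase.

    Returns the samples and the phase where the block ends, so consecutive
    blocks join smoothly even when the frequency changes between them.
    """
    step = 2 * math.pi * freq_hz / sample_rate
    samples = [math.sin(phase + step * i) for i in range(n_samples)]
    return samples, (phase + step * n_samples) % (2 * math.pi)

# Usage: successive (hypothetical) palm readings drive successive audio blocks.
phase = 0.0
for height in [120.0, 300.0, 480.0]:
    freq = height_to_frequency(height)
    block, phase = sine_block(freq, phase, 512)
```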